Conditional Probability

Imagine you're trying to predict the chance of rain today. You might start with a basic probability based on the weather forecast. But then you notice dark clouds rolling in—suddenly, the odds of rain feel higher because you have new information. This is where conditional probability comes in: it’s about updating probabilities when you know something extra has happened.

Think of it like a game with a bag of colored balls—say, 5 red, 3 blue, and 2 green (10 total). The chance of picking a red ball is 5 out of 10, or \(\frac{5}{10} = \frac{1}{2}\). Now, suppose someone tells you they’ve already removed all the blue balls. The bag now has 5 red and 2 green (7 total), so the chance of picking a red ball jumps to \(\frac{5}{7}\). That’s conditional probability: the probability of an event (picking red) given that another event (blue balls removed) has occurred.

Formally, conditional probability is the likelihood of one event, say \(F\), happening given that another event, \(E\), has already taken place. We write it as \(\PCond{F}{E}\), pronounced “the probability of \(F\) given \(E\).” It’s a way to refine our predictions with new context, and it’s used everywhere—from weather forecasts to medical tests.

Definition

Let’s explore conditional probability with a two-way table showing 100 students’ preferences for math, split by gender:

| | Loves Math | Does Not Love Math | Total |
|---|---|---|---|
| Girls | 35 | 16 | 51 |
| Boys | 30 | 19 | 49 |
| Total | 65 | 35 | 100 |

- Probability the student is a girl:$$\begin{aligned}P(\text{Girl}) &= \frac{\text{Number of girls}}{\text{Number of students}} \\ &= \frac{51}{100}.\end{aligned}$$

- Probability the student loves math and is a girl:$$\begin{aligned}P(\text{Loves Math and Girl}) &= \frac{\text{Number of girls who love math}}{\text{Number of students}} \\ &= \frac{35}{100}.\end{aligned}$$

- Probability the student loves math, given they are a girl: since we’re told the student is a girl, we focus only on the 51 girls:$$\begin{aligned}\PCond{\text{Loves Math}}{\text{Girl}} &= \frac{\text{Number of girls who love math}}{\text{Number of girls}} \\ &= \frac{35}{51}.\end{aligned}$$

- Connecting to the formula: notice that$$\begin{aligned}\PCond{\text{Loves Math}}{\text{Girl}} &=\frac{35}{51}\\ &= \frac{35/100}{51/100}\\ &= \dfrac{P(\text{Loves Math and Girl})}{P(\text{Girl})}.\end{aligned}$$This pattern gives us the general rule for conditional probability.
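As a quick check of the table arithmetic, the following Python sketch recomputes the three probabilities with exact fractions. The variable names are illustrative and not part of the original text.

```python
from fractions import Fraction

# Counts read from the two-way table (100 students in total).
girls_love_math = 35
girls_total = 51
students_total = 100

p_girl = Fraction(girls_total, students_total)               # P(Girl)
p_love_and_girl = Fraction(girls_love_math, students_total)  # P(Loves Math and Girl)

# Restricting attention to the 51 girls gives the conditional probability directly.
p_love_given_girl = Fraction(girls_love_math, girls_total)

# The same value is obtained as the ratio of the two probabilities above.
assert p_love_given_girl == p_love_and_girl / p_girl
print(p_love_given_girl)  # 35/51
```

Using `Fraction` rather than floating-point numbers keeps the comparison exact.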

Definition Conditional Probability

The conditional probability of event \(F\) given event \(E\) is the probability of \(F\) occurring, knowing that \(E\) has already happened. It’s denoted \(\PCond{F}{E}\) and calculated as:$$\textcolor{colordef}{\PCond{F}{E} = \frac{P(E \cap F)}{P(E)}}, \quad \text{where } P(E) > 0.$$
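The definition translates almost directly into code. Below is a minimal sketch, assuming the two probabilities are already known; the function name `conditional_probability` and the sample numbers are hypothetical, chosen only for illustration.

```python
from fractions import Fraction

def conditional_probability(p_e_and_f, p_e):
    """Return P(F | E) = P(E and F) / P(E); undefined when P(E) = 0."""
    if p_e == 0:
        raise ValueError("P(E) must be positive for P(F | E) to be defined")
    return p_e_and_f / p_e

# Illustrative numbers (not from the text): P(E and F) = 1/4, P(E) = 1/2.
print(conditional_probability(Fraction(1, 4), Fraction(1, 2)))  # 1/2
```

The explicit check mirrors the condition \(P(E) > 0\) in the definition.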

Example

A fair six-sided die has odd faces (1, 3, 5) painted green and even faces (2, 4, 6) painted blue. You roll it and see the top face is blue. What’s the probability it’s a 6?

- Sample space: \(\{1, 2, 3, 4, 5, 6\}\), 6 equally likely outcomes.

- Event \(E\) (face is blue): \(\{2, 4, 6\}\), so \(P(E) = \frac{3}{6}\).

- Event \(F\) (roll a 6): \(\{6\}\).

- Intersection \(E \cap F\): \(\{6\}\), so \(P(E \cap F) = \frac{1}{6}\).

- Conditional probability:$$\begin{aligned}\PCond{F}{E} &= \frac{P(E \cap F)}{P(E)}\\ &= \frac{\frac{1}{6}}{\frac{3}{6}}\\ &= \frac{1}{6} \times \frac{6}{3}\\ &= \frac{1}{3}.\end{aligned}$$

- The probability of rolling a 6, given the face is blue, is \(\frac{1}{3}\).
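The same steps can be sketched in Python by listing the outcomes as sets. This assumes equally likely outcomes, as for the fair die here; the helper `prob` is a hypothetical name introduced for this sketch.

```python
from fractions import Fraction

sample_space = {1, 2, 3, 4, 5, 6}  # fair die: six equally likely outcomes
E = {2, 4, 6}                      # the face is blue (even)
F = {6}                            # the roll is a 6

def prob(event):
    """Probability of an event when all outcomes are equally likely."""
    return Fraction(len(event & sample_space), len(sample_space))

# P(F | E) = P(E and F) / P(E); set intersection plays the role of "and".
p_f_given_e = prob(E & F) / prob(E)
print(p_f_given_e)  # 1/3
```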

Conditional Probability Tree Diagrams

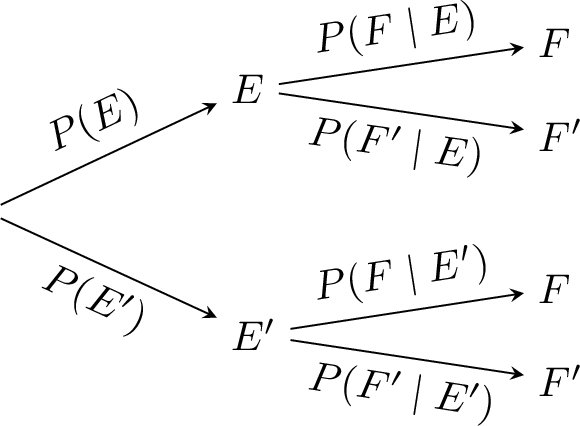

Definition Conditional Probability Tree Diagram

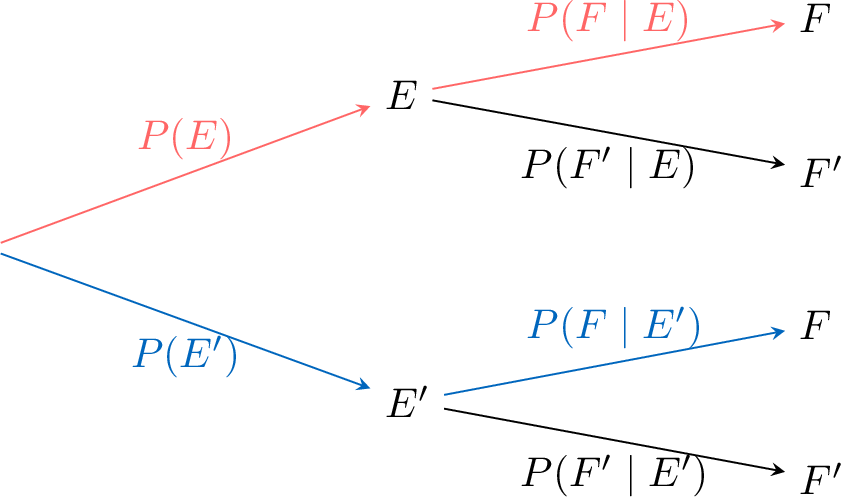

A conditional probability tree visually organizes probabilities for a sequence of events:

- Each branch from a node shows either an unconditional probability (e.g. \(P(E)\)) or a conditional probability (e.g. \(\PCond{F}{E}\)).

- Events are labeled at the end of each branch.

- The probability of an outcome at the end of a path is the product of the probabilities along that path.

Example

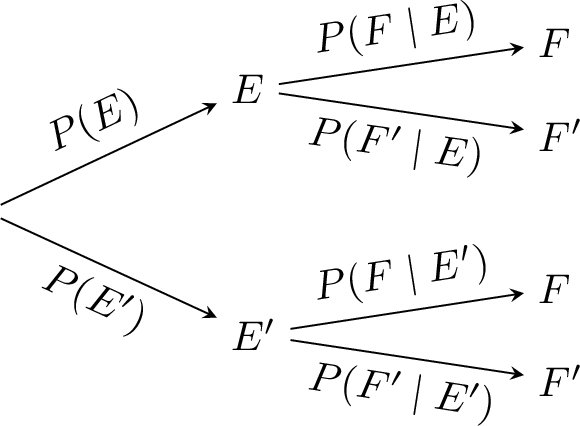

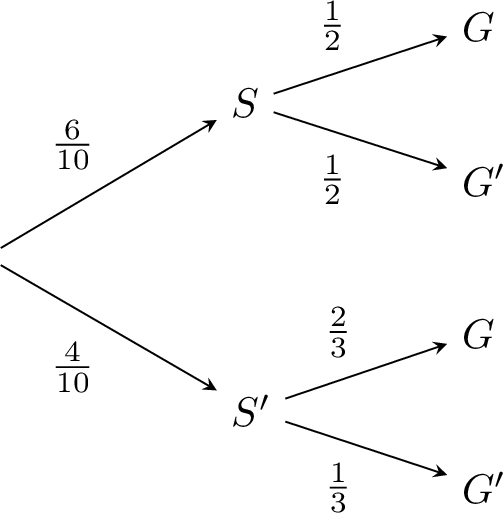

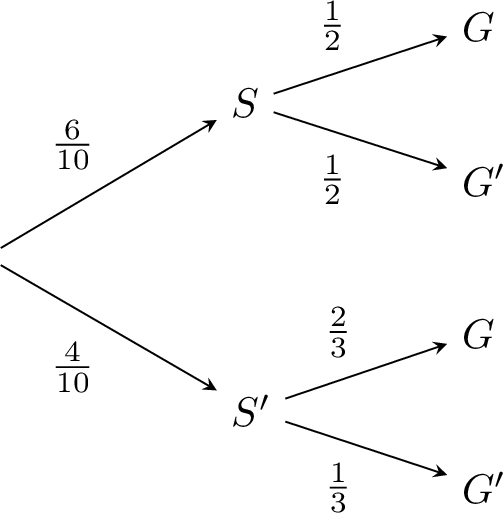

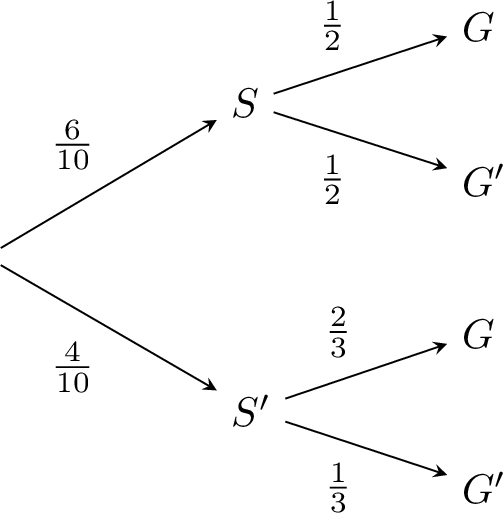

The probability Sam coaches a game is \(\frac{6}{10}\), and the probability Alex coaches is \(\frac{4}{10}\). If Sam coaches, the probability that a randomly selected player is a goalkeeper is \(\frac{1}{2}\); if Alex coaches, it is \(\frac{2}{3}\).

Draw the tree diagram.

- Define the events:

  - \(S\): Sam coaches (so \(S'\): Alex coaches).

  - \(G\): the selected player is a goalkeeper.

- Define the probabilities:

  - \(P(S) = \frac{6}{10}\) and \(P(S') = 1 - P(S) = \frac{4}{10}\).

  - \(\PCond{G}{S} = \frac{1}{2}\) and \(\PCond{G'}{S} = 1 - \PCond{G}{S} = \frac{1}{2}\).

  - \(\PCond{G}{S'} = \frac{2}{3}\) and \(\PCond{G'}{S'} = 1 - \PCond{G}{S'} = \frac{1}{3}\).

- Tree diagram:
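One way to sketch this tree in code is with nested dictionaries, a layout chosen here purely for illustration. The first level holds the coach probabilities and the second level holds the conditional probabilities on each branch.

```python
from fractions import Fraction

# First-level branches: who coaches.
p_coach = {"S": Fraction(6, 10), "S'": Fraction(4, 10)}

# Second-level branches: P(G | coach) and P(G' | coach).
p_given_coach = {
    "S":  {"G": Fraction(1, 2), "G'": Fraction(1, 2)},
    "S'": {"G": Fraction(2, 3), "G'": Fraction(1, 3)},
}

# The branches leaving any node must sum to 1.
assert sum(p_coach.values()) == 1
for branches in p_given_coach.values():
    assert sum(branches.values()) == 1

# Print the tree, one root-to-leaf path per line.
for coach, p in p_coach.items():
    for outcome, q in p_given_coach[coach].items():
        print(f"{coach} ({p}) -> {outcome} ({q})")
```

The assertions are a handy sanity check when building a tree by hand: if the branches from a node do not sum to 1, a probability has been mislabeled.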

Joint Probability: \(P(E \cap F)\)

Sometimes we know \(P(E)\) and \(\PCond{F}{E}\) and need the chance both \(E\) and \(F\) happen together—like finding the probability that a student is a girl who loves math. This probability is called the joint probability \(P(E \cap F)\).

Proposition Joint Probability Formula

$$P(E \cap F) = P(E) \times \PCond{F}{E}, \quad P(E \cap F) = P(F) \times \PCond{E}{F}.$$

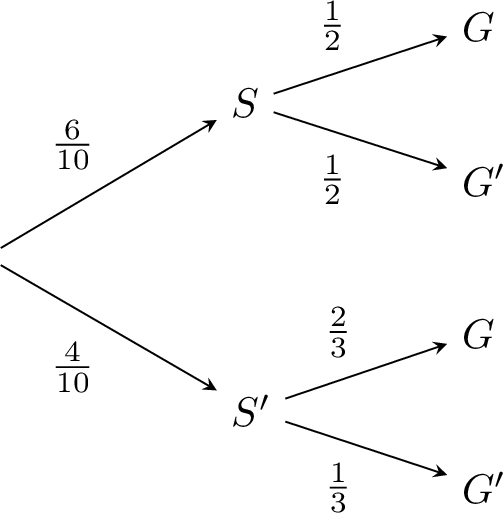

Method Finding \(P(E \cap F)\) in a Tree

- Identify the path where \(E\) and \(F\) both occur.

- Multiply the probabilities along that path.

Example

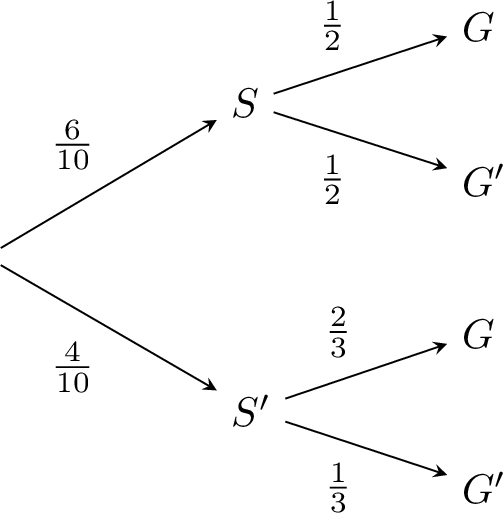

For this probability tree,

- Path: \(S\) to \(G\) (highlighted).

- Calculate:$$\begin{aligned}P(S \cap G) &= P(S) \times \PCond{G}{S}\\ &= \frac{6}{10} \times \frac{1}{2}\\ &= \frac{3}{10}.\end{aligned}$$
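The path multiplication can be checked in a couple of lines of Python. This is only a sketch with illustrative variable names, using the probabilities from the coaching example.

```python
from fractions import Fraction

p_s = Fraction(6, 10)         # P(S): Sam coaches
p_g_given_s = Fraction(1, 2)  # P(G | S): goalkeeper, given Sam coaches

# Multiply along the path S -> G to get the joint probability P(S and G).
p_s_and_g = p_s * p_g_given_s
print(p_s_and_g)  # 3/10
```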

Law of Total Probability

Theorem Law of Total Probability

For events \(E\) and \(F\) with \(0 < P(E) < 1\):$$P(F) = P(E) \PCond{F}{E} + P(E') \PCond{F}{E'}.$$This works because \(E\) and its complement \(E'\) always form a partition of the sample space. More generally, if \((E_1,\dots,E_n)\) is a partition of the sample space with each \(P(E_i) > 0\), then$$P(F) = \sum_{i=1}^n P(E_i)\PCond{F}{E_i}.$$

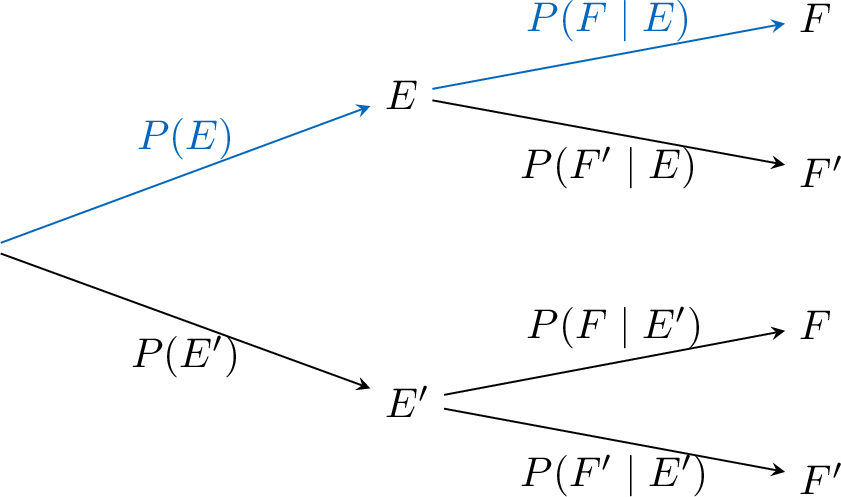

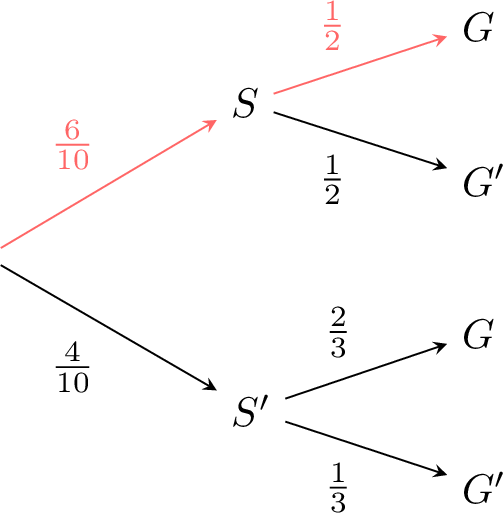

Method Finding \(P(F)\) in a Tree

- Identify all paths to \(F\).

- Multiply probabilities along each path and sum them.

Example

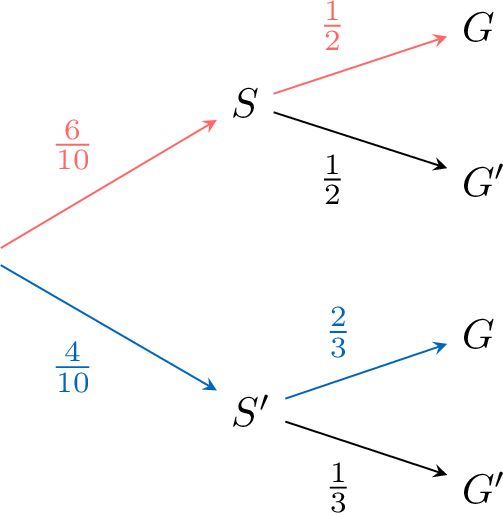

For this probability tree,

- Paths to \(G\): \(S\) to \(G\), and \(S'\) to \(G\).

- Calculate:$$\begin{aligned}P(G) &= \textcolor{colordef}{\frac{6}{10} \times \frac{1}{2}} + \textcolor{colorprop}{\frac{4}{10} \times \frac{2}{3}} \\ &= \textcolor{colordef}{\frac{3}{10}} + \textcolor{colorprop}{\frac{8}{30}} \\ &= \textcolor{colordef}{\frac{9}{30}} + \textcolor{colorprop}{\frac{8}{30}} \\ &= \frac{17}{30}.\end{aligned}$$
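The sum over paths generalizes to any partition. Below is a minimal Python sketch of the law of total probability; the function name `total_probability` and its list-based arguments are assumptions made for this illustration.

```python
from fractions import Fraction

def total_probability(priors, likelihoods):
    """Sum of P(E_i) * P(F | E_i) over a partition E_1, ..., E_n."""
    if sum(priors) != 1:
        raise ValueError("the P(E_i) of a partition must sum to 1")
    return sum(p * q for p, q in zip(priors, likelihoods))

priors = [Fraction(6, 10), Fraction(4, 10)]     # P(S), P(S')
likelihoods = [Fraction(1, 2), Fraction(2, 3)]  # P(G | S), P(G | S')
print(total_probability(priors, likelihoods))  # 17/30
```

Each product in the sum is one path through the tree, so the function also works for trees with more than two first-level branches.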