Antiderivatives and Differential Equations

Differential Equations

Definition Differential Equation

- A differential equation is an equality relating an unknown function \(y\) of a variable \(x\), its successive derivatives \(y', y'', \dots\), and possibly other known functions (constants, a given function \(f\), etc.).

- A solution of a differential equation is any function, differentiable as many times as the equation requires, that satisfies the equality.

Example

The function \(x \mapsto e^{-x}\) is a solution of the equation \(y'' - y = 0\) because, for \(y(x) = e^{-x}\), we have \(y'(x) = -e^{-x}\) and \(y''(x) = e^{-x}\).

Thus, \(y''(x) - y(x) = e^{-x} - e^{-x} = 0\).
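The verification above can also be checked numerically. The following is a minimal Python sketch, not part of the original text: the helper names `second_derivative` and `residual`, the step `h`, and the sample points are assumptions chosen for illustration. It approximates \(y''\) with a central finite difference and evaluates \(y'' - y\), which should be close to zero up to rounding and truncation error.

```python
import math

def second_derivative(func, x, h=1e-4):
    """Approximate func''(x) with a central finite difference.

    The step h is an illustrative assumption, not a tuned value.
    """
    return (func(x + h) - 2.0 * func(x) + func(x - h)) / (h * h)

def y(x):
    """Candidate solution from the example: y(x) = e^(-x)."""
    return math.exp(-x)

def residual(x):
    """Left-hand side y''(x) - y(x); close to 0 when y solves y'' - y = 0."""
    return second_derivative(y, x) - y(x)

# Sample points chosen arbitrarily for the check.
for x in (-1.0, 0.0, 2.0):
    print(x, residual(x))
```

A small residual at a few points is only a sanity check; the algebraic computation in the example is what establishes the result.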

Definition Understanding Notations

It is crucial to understand that in a differential equation, \(y\) is a function, not a fixed number. Depending on the context (Mathematics, Physics, or Engineering), we use different notations to represent the same derivative:

- Lagrange notation: Uses a "prime" symbol (\(y'\)). This is the most common shorthand in mathematics.

- Leibniz notation: Uses a differential ratio (\(\dfrac{dy}{dt}\) or \(\dfrac{dy}{dx}\)). This explicitly shows which variable we are differentiating with respect to (usually time \(t\) or position \(x\)).

- Functional notation: Explicitly writes \(y(t)\) or \(y(x)\) to remind us that the value depends on the variable.

Example

The following three equations represent exactly the same relationship where \(y\) is a function of time \(t\):

- Shorthand style: \(2y' + 3y = t\)

- Functional style: \(2y'(t) + 3y(t) = t\)

- Differential style (Leibniz): \(2\dfrac{dy}{dt} + 3y(t) = t\)

Antiderivative

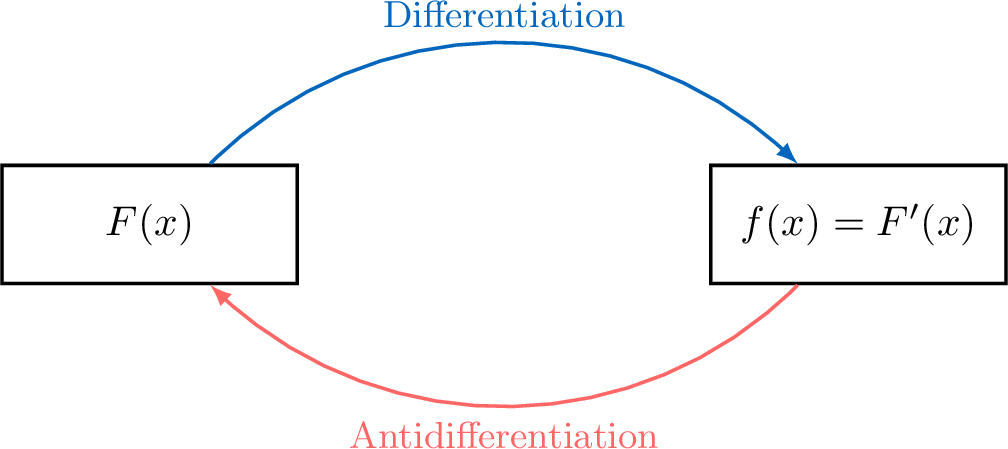

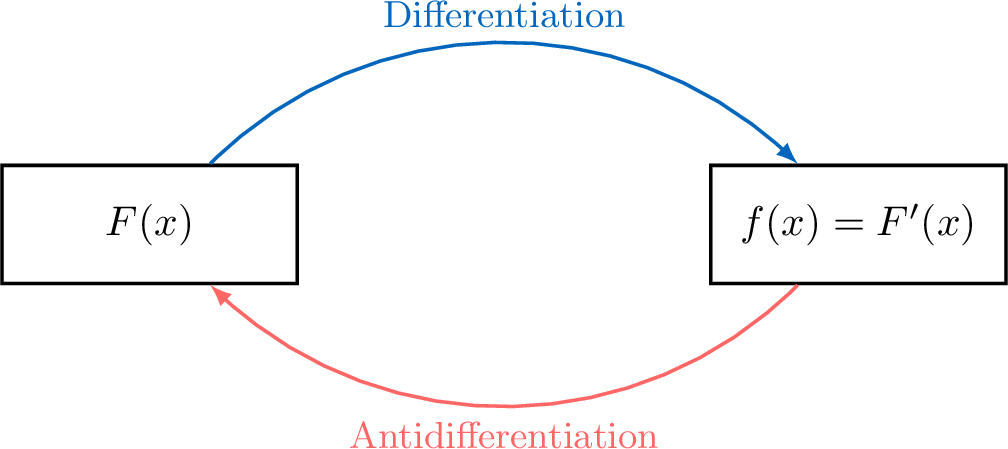

Definition Antiderivative

Let \(f\) be a function defined on an interval \(I\).

We say that \(F\) is an antiderivative (or primitive) of \(f\) on \(I\) if \(F\) is differentiable on \(I\) and for all \(x \in I\): $$ \textcolor{colordef}{F'(x) = f(x)} $$ In other words, an antiderivative of \(f\) is a solution to the differential equation \(y' = f\).

Example

Let \(f(x) = 2\) on \(\mathbb{R}\). The function \(F(x) = 2x\) is a primitive of \(f\) on \(\mathbb{R}\) because \(F'(x) = 2 = f(x)\).

Proposition Existence

Every continuous function on an interval \(I\) admits antiderivatives on \(I\).

Proposition General Form

If \(F\) is an antiderivative of \(f\) on an interval \(I\), then the antiderivatives of \(f\) on \(I\) are exactly the functions \(G\) of the form: $$ \textcolor{colorprop}{G(x) = F(x) + C} \quad \text{where } C \in \mathbb{R} \text{ is a constant.} $$

Let \(F\) be a fixed antiderivative of \(f\) on the interval \(I\).

- Analysis: Let \(G\) be any antiderivative of \(f\) on \(I\).

By definition, \(G'(x) = f(x)\) and \(F'(x) = f(x)\).

Consider the function \(H = G - F\). Its derivative is: $$ H'(x) = G'(x) - F'(x) = f(x) - f(x) = 0 $$ Since \(H'\) is zero on the interval \(I\), \(H\) is a constant function on \(I\).

Therefore, there exists a constant \(C \in \mathbb{R}\) such that \(G(x) - F(x) = C\), which gives \(G(x) = F(x) + C\).

- Synthesis: Conversely, let \(G\) be a function defined by \(G(x) = F(x) + C\) for some constant \(C \in \mathbb{R}\).

Then \(G\) is differentiable on \(I\) and \(G'(x) = F'(x) + 0 = f(x)\).

Thus, \(G\) is indeed an antiderivative of \(f\) on \(I\).

Proposition Initial Condition

Let \(f\) be continuous on \(I\) and let \(x_0 \in I\) and \(y_0 \in \mathbb{R}\).

Then there exists a unique antiderivative \(F\) of \(f\) on \(I\) such that \(F(x_0) = y_0\).

Let \(G\) be any antiderivative of \(f\) on \(I\).

According to the General Form proposition, any antiderivative \(F\) of \(f\) must be of the form \(F(x) = G(x) + C\) for some constant \(C \in \mathbb{R}\).

The condition \(F(x_0) = y_0\) is therefore equivalent to: $$ G(x_0) + C = y_0 \iff C = y_0 - G(x_0) $$ Since \(y_0\) and \(G(x_0)\) are fixed real numbers, this equation has a unique solution for the constant \(C\).

Consequently, there exists one and only one primitive \(F\) satisfying the initial condition.
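The proof is constructive: starting from any antiderivative \(G\), the constant is \(C = y_0 - G(x_0)\). A minimal Python sketch of that construction follows. The function name `antiderivative_through` and the sample data (\(f = \cos\), \(G = \sin\), \(x_0 = 0\), \(y_0 = 3\)) are assumptions made for illustration only.

```python
import math

def antiderivative_through(G, x0, y0):
    """Return (F, C) with F = G + C and C = y0 - G(x0), so that F(x0) = y0.

    G is assumed to be a known antiderivative of the function of interest.
    """
    C = y0 - G(x0)

    def F(x):
        return G(x) + C

    return F, C

# Illustrative data: G = sin is an antiderivative of cos on R.
F, C = antiderivative_through(math.sin, 0.0, 3.0)
print(C)       # constant selected by the initial condition
print(F(0.0))  # equals y0 by construction
```

Changing `y0` changes only the constant `C`, which mirrors the statement that the initial condition selects one antiderivative among the family \(G + C\).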

The Importance of Initial Conditions

In this section, we proved the following fact: if \(f\) is continuous on an interval \(I\), then the differential equation $$ y' = f(x) $$ has infinitely many solutions (any two of them differ by a constant), and a single initial condition \(y(x_0)=y_0\) selects exactly one of them.

This is a simple example of an idea that appears throughout classical physics: many laws are written as differential equations, and the initial condition specifies the state of the system at a given time.

- The differential equation represents the law (how the state changes).

- The initial condition represents the initial state (where the evolution starts).

Example Physics Application: The Falling Apple

Consider an apple falling from a tree. Let \(v(t)\) be its vertical velocity at time \(t\), measured along an upward-pointing axis. Neglecting air resistance, Newton's second law states that the derivative of the velocity is the constant acceleration due to gravity: $$ v'(t) = -g \quad (\text{where } g \approx 9.8 \text{ m/s}^2) $$ The velocity function \(v\) is therefore a primitive of the constant function \(f(t) = -g\).

- Determine the general form of the velocity \(v(t)\).

- If the apple starts from rest at \(t=0\), we have the initial condition \(v(0) = 0\). Find the unique velocity function.

- The antiderivatives of the constant function \(-g\) are the functions \(v(t) = -gt + C\), where \(C \in \mathbb{R}\).

- Using the initial condition: \(v(0) = -g \times 0 + C = 0 \implies C = 0\).

The unique solution is \(v(t) = -gt\).
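The "law plus initial state" idea can be illustrated with a short Python sketch. It is a hedged illustration, not part of the original example: the names `velocity_exact` and `velocity_by_steps`, the step count, and the default `v0` are assumptions. The second function builds the velocity by accumulating the change \(v'(t)\,\Delta t = -g\,\Delta t\) over many small time steps, starting from the initial value, and the result is compared with the formula \(v(t) = -gt + v_0\).

```python
G_ACCEL = 9.8  # m/s^2, the approximate value used in the text

def velocity_exact(t, v0=0.0):
    """Closed form v(t) = -g*t + C, with C = v0 fixed by v(0) = v0."""
    return -G_ACCEL * t + v0

def velocity_by_steps(t_end, v0=0.0, n_steps=1000):
    """Accumulate v'(t) * dt = -g * dt over n_steps small time steps."""
    dt = t_end / n_steps
    v = v0  # initial state
    for _ in range(n_steps):
        v += -G_ACCEL * dt  # the law: the velocity changes at rate -g
    return v

print(velocity_exact(2.0))     # velocity after 2 s, starting from rest
print(velocity_by_steps(2.0))  # same value up to rounding
```

Because the acceleration is constant, the step-by-step accumulation agrees with the closed form up to floating-point rounding; a different `v0` shifts both results by the same constant.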

Antiderivative Formulae

Calculating antiderivatives is essentially the reverse process of differentiation. To find a primitive, we look for a function whose derivative matches the one we are given. Like derivatives, antiderivatives follow specific rules for sums, constant multiples, and certain compositions.

Proposition Linearity

Let \(f\) and \(g\) be two continuous functions on an interval \(I\).

If \(F\) and \(G\) are antiderivatives of \(f\) and \(g\) respectively, then:

- Sum Rule: \(F + G\) is an antiderivative of \(f + g\).

- Constant Multiple Rule: \(\alpha F\) is an antiderivative of \(\alpha f\) (where \(\alpha \in \mathbb{R}\)).
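As a worked illustration of linearity (the functions below are chosen only for this example, and each antiderivative can be checked by differentiation): \(F(x) = x^2\) is an antiderivative of \(f(x) = 2x\), and \(G(x) = \sin(x)\) is an antiderivative of \(g(x) = \cos(x)\) on \(\mathbb{R}\). Combining the two rules, an antiderivative of \(h = 3f + 5g\) is \(H = 3F + 5G\): $$ h(x) = 6x + 5\cos(x) \quad \Longrightarrow \quad H(x) = 3x^2 + 5\sin(x) $$ Differentiating confirms the result: \(H'(x) = 6x + 5\cos(x) = h(x)\).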

Proposition Reference Antiderivatives

Let \(C\) be a real constant.

| Function \(f(x)\) | Antiderivative \(F(x)\) | Interval \(I\) |
| --- | --- | --- |
| \(k\) (constant) | \(kx + C\) | \(\mathbb{R}\) |
| \(x\) | \(\frac{1}{2}x^2 + C\) | \(\mathbb{R}\) |
| \(x^n\) (\(n \in \mathbb{Z}\), \(n\neq -1\)) | \(\frac{1}{n+1}x^{n+1} + C\) | \(\mathbb{R}\) for \(n\geq 0\); \((-\infty,0)\) or \((0, +\infty)\) for \(n\leq -2\) |
| \(\frac{1}{x}\) | \(\ln(x) + C\) | \((0, +\infty)\) |
| \(\frac{1}{x^2}\) | \(-\frac{1}{x} + C\) | \((-\infty, 0)\) or \((0, +\infty)\) |
| \(\frac{1}{\sqrt{x}}\) | \(2\sqrt{x} + C\) | \((0, +\infty)\) |
| \(\sin(x)\) | \(-\cos(x) + C\) | \(\mathbb{R}\) |
| \(\cos(x)\) | \(\sin(x) + C\) | \(\mathbb{R}\) |
| \(e^x\) | \(e^x + C\) | \(\mathbb{R}\) |
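Each row of the table can be sanity-checked by differentiating \(F\) and comparing with \(f\). The Python sketch below does this numerically with a central finite difference. It is an illustration under stated assumptions: the helper `derivative`, the list `TABLE`, the step `h`, and the sample points (each taken inside the interval given in the table) are all choices made for this example, and the constant \(C\) is set to 0.

```python
import math

def derivative(func, x, h=1e-6):
    """Approximate func'(x) with a central finite difference (h is an assumption)."""
    return (func(x + h) - func(x - h)) / (2.0 * h)

# (label, f, F with C = 0, sample point inside the interval I)
TABLE = [
    ("x^3", lambda x: x**3, lambda x: x**4 / 4.0, 1.5),
    ("1/x", lambda x: 1.0 / x, math.log, 2.0),
    ("1/x^2", lambda x: 1.0 / x**2, lambda x: -1.0 / x, -3.0),
    ("1/sqrt(x)", lambda x: 1.0 / math.sqrt(x), lambda x: 2.0 * math.sqrt(x), 4.0),
    ("sin(x)", math.sin, lambda x: -math.cos(x), 0.7),
    ("cos(x)", math.cos, math.sin, 0.7),
    ("exp(x)", math.exp, math.exp, 1.0),
]

def max_error():
    """Largest gap |F'(x) - f(x)| over the sampled rows."""
    return max(abs(derivative(F, x) - f(x)) for _, f, F, x in TABLE)

for label, f, F, x in TABLE:
    print(label, abs(derivative(F, x) - f(x)))
```

A numerical check at one point per row cannot replace the differentiation rules, but it is a quick way to catch a sign or coefficient error when memorising the table.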

Proposition Composition Rules

Let \(u\) be a differentiable function on an interval \(I\).

| Function \(f\) | Antiderivative \(F\) | Condition on \(u\) |
| --- | --- | --- |
| \(u' \cdot u^n\) (\(n \neq -1\)) | \(\frac{1}{n+1}u^{n+1} + C\) | \(u(x) \neq 0\) if \(n < 0\) |
| \(\frac{u'}{u}\) | \(\ln(u) + C\) | \(u(x) > 0\) |
| \(\frac{u'}{u^2}\) | \(-\frac{1}{u} + C\) | \(u(x) \neq 0\) |
| \(\frac{u'}{\sqrt{u}}\) | \(2\sqrt{u} + C\) | \(u(x) > 0\) |
| \(u' e^u\) | \(e^u + C\) | -- |
| \(u' \sin(u)\) | \(-\cos(u) + C\) | -- |
| \(u' \cos(u)\) | \(\sin(u) + C\) | -- |
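A short worked illustration of two rows of this table, with functions chosen only for this example:

- For \(f(x) = \dfrac{2x}{x^2+1}\) on \(\mathbb{R}\), take \(u(x) = x^2 + 1\), so that \(u'(x) = 2x\) and \(u(x) > 0\). Then \(f = \dfrac{u'}{u}\), and the antiderivatives of \(f\) are \(F(x) = \ln(x^2+1) + C\).

- For \(f(x) = x e^{x^2}\) on \(\mathbb{R}\), take \(u(x) = x^2\), so that \(u'(x) = 2x\). Then \(f = \dfrac{1}{2} u' e^{u}\), and by the constant multiple rule the antiderivatives of \(f\) are \(F(x) = \dfrac{1}{2} e^{x^2} + C\).

Both results can be checked by differentiation, for instance: $$ \left(\dfrac{1}{2} e^{x^2}\right)' = \dfrac{1}{2} \cdot 2x \cdot e^{x^2} = x e^{x^2} $$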

Solving Differential Equations

Proposition Solutions of \(y' = ay\)

Let \(a\) be a non-zero real number.

The solutions to the differential equation \(y' = ay\) are the functions defined on \(\mathbb{R}\) by: $$ \textcolor{colorprop}{f(x) = K e^{ax}} \quad \text{where } K \in \mathbb{R} \text{ is a constant.} $$

Every function of the form \(f(x) = K e^{ax}\) is clearly a solution since \(f'(x) = aKe^{ax} = af(x)\).

To prove that every solution has this form, let \(y\) be any solution of \(y' = ay\) and define \(K(x) = y(x)e^{-ax}\), so that: $$ y(x) = K(x) e^{ax} $$ The function \(K\) is differentiable as a product of differentiable functions. Differentiating \(y\): $$ \begin{aligned}y'(x) &= K'(x)e^{ax} + K(x) \cdot (ae^{ax}) \\ &= K'(x)e^{ax} + a \underbrace{K(x)e^{ax}}_{y(x)} \\ &= K'(x)e^{ax} + ay(x) \\ &= K'(x)e^{ax} + y'(x)\qquad (\text{as } y' = ay)\end{aligned} $$ Subtracting \(y'(x)\) from both sides gives \(K'(x)e^{ax} = 0\). As \(e^{ax} \neq 0\) for all \(x\), it follows that \(K'(x) = 0\).

This implies that \(K(x)\) is a constant \(K\). Thus, \(y(x) = Ke^{ax}\).

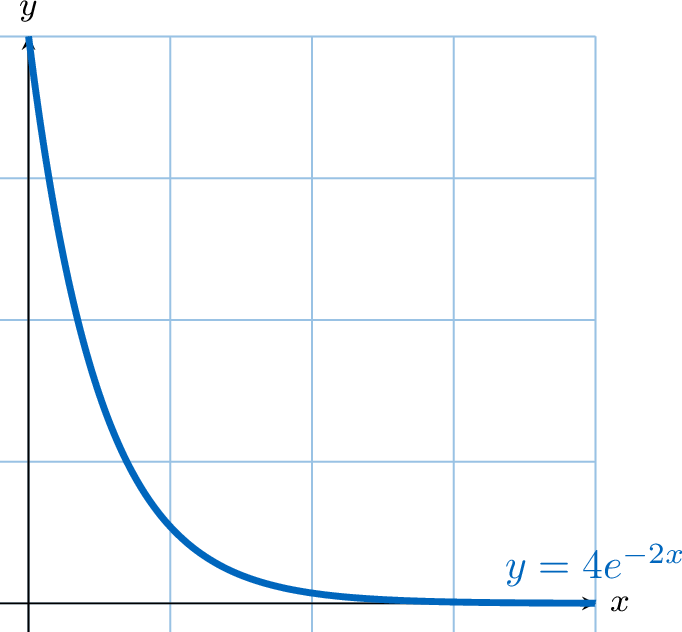

Example

Solve the differential equation \(y' = -2y\) with the initial condition \(y(0) = 4\).

- General solution: The equation is of the form \(y' = ay\) with \(a = -2\). The general solutions are the functions: $$ y(x) = K e^{-2x} \quad \text{where } K \in \mathbb{R} $$

- Particular solution: Using the initial condition \(y(0) = 4\): $$ K e^{-2(0)} = 4 \implies K \times 1 = 4 \implies K = 4 $$ The unique solution is \(y(x) = 4e^{-2x}\).
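As a numerical cross-check of this example, the sketch below approximates the solution with the explicit Euler method, a standard numerical technique that is not used in the text and is brought in here only for illustration. Starting from the initial state \(y(0) = 4\), it repeatedly applies the law \(y' = -2y\) over small steps and compares the result at \(x = 1\) with \(4e^{-2x}\). The function names, the step count, and the end point are assumptions.

```python
import math

def euler_final_value(a, y0, x_end, n_steps):
    """Approximate y(x_end) for y' = a*y, y(0) = y0, with explicit Euler steps."""
    h = x_end / n_steps
    y = y0  # initial condition
    for _ in range(n_steps):
        y += h * a * y  # follow the slope a*y over a step of length h
    return y

def exact(x):
    """Solution found in the example: y(x) = 4 e^(-2x)."""
    return 4.0 * math.exp(-2.0 * x)

approx = euler_final_value(a=-2.0, y0=4.0, x_end=1.0, n_steps=100_000)
print(approx, exact(1.0))
```

The two values agree to several decimal places, and the gap shrinks as `n_steps` grows, which is consistent with \(4e^{-2x}\) being the unique solution selected by the initial condition.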

Proposition Solutions of \(y' = ay + b\)

Let \(a\) and \(b\) be two real numbers with \(a \neq 0\).

The solutions to the differential equation \(y' = ay + b\) are the functions defined on \(\mathbb{R}\) by: $$ \textcolor{colorprop}{f(x) = K e^{ax} - \dfrac{b}{a}} \quad \text{where } K \in \mathbb{R} \text{ is a constant.} $$
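The text states this result without proof. One possible argument, sketched here under the assumption that the proposition on \(y' = ay\) is already established, uses the auxiliary function \(z = y + \dfrac{b}{a}\) (a notation introduced only for this sketch). Since \(\dfrac{b}{a}\) is a constant, \(z' = y'\), and: $$ \begin{aligned} y' = ay + b &\iff z' = a\left(y + \dfrac{b}{a}\right) \\ &\iff z' = az \\ &\iff z(x) = Ke^{ax} \text{ for some } K \in \mathbb{R} \\ &\iff y(x) = Ke^{ax} - \dfrac{b}{a} \text{ for some } K \in \mathbb{R} \end{aligned} $$ In particular, the choice \(K = 0\) gives the constant solution \(y(x) = -\dfrac{b}{a}\).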

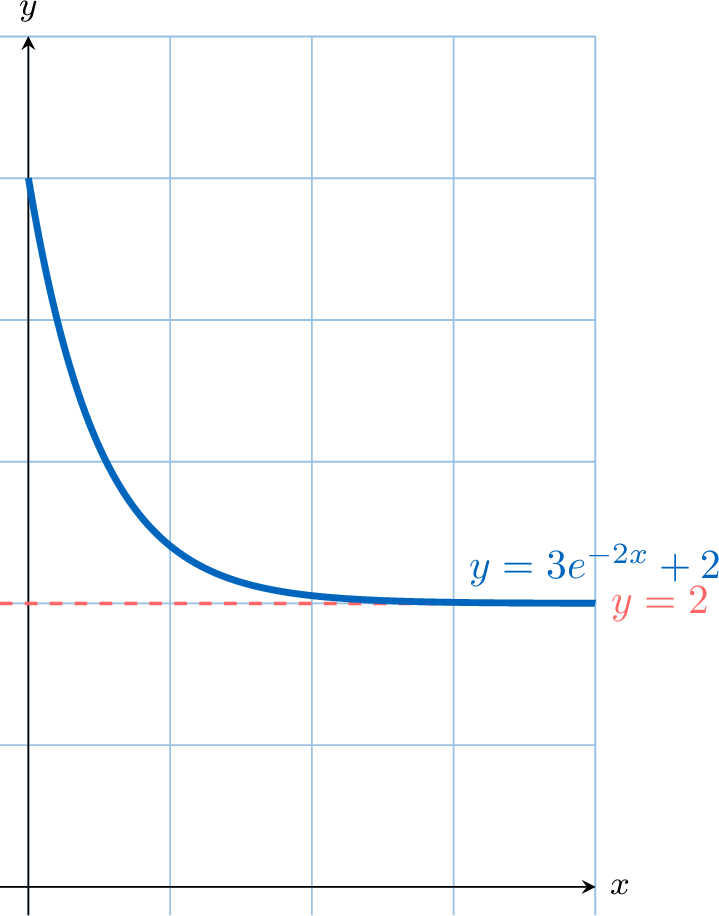

Example

Solve the differential equation \(y' = -2y + 4\) with the initial condition \(y(0) = 5\).

- General solution: The equation is of the form \(y' = ay + b\) with \(a = -2\) and \(b = 4\).

We calculate the constant term: \(-\dfrac{b}{a} = -\dfrac{4}{-2} = 2\).

The general solutions are: $$ y(x) = K e^{-2x} + 2 \quad \text{where } K \in \mathbb{R} $$

- Particular solution: Using the initial condition \(y(0) = 5\): $$ K e^{-2(0)} + 2 = 5 \implies K + 2 = 5 \implies K = 3 $$ The unique solution is \(y(x) = 3e^{-2x} + 2\).
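The two steps of this example (general solution, then the constant from the initial condition) can be sketched in Python. This is a minimal illustration, not a general-purpose solver: the function name `solve_linear_ode` and the variable `constant_term` are hypothetical, and an initial condition at an arbitrary point \(x_0\) is allowed by solving \(K e^{a x_0} - \frac{b}{a} = y_0\) for \(K\).

```python
import math

def solve_linear_ode(a, b, x0, y0):
    """Return (y, K) for y' = a*y + b with y(x0) = y0, assuming a != 0.

    The solution has the form y(x) = K * e^(a*x) - b/a.
    """
    if a == 0:
        raise ValueError("a must be non-zero")
    constant_term = -b / a
    # Solve K * e^(a*x0) + constant_term = y0 for K.
    K = (y0 - constant_term) * math.exp(-a * x0)

    def y(x):
        return K * math.exp(a * x) + constant_term

    return y, K

# Data from the example: y' = -2y + 4, y(0) = 5.
y, K = solve_linear_ode(a=-2.0, b=4.0, x0=0.0, y0=5.0)
print(K)       # constant K of the particular solution
print(y(0.0))  # reproduces the initial condition
```

With the data of the example, the computed constant matches \(K = 3\), so the returned function is \(3e^{-2x} + 2\).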